Florida mulling charging ChatGPT in murder case

12 May 2026

Skills-Based Hiring: A Strategic Guide For HR Pros And HR Leaders

Explore skills-based hiring for HR leaders and HR pros. Learn what skills-based hiring is, what benefits it offers, and how to implement skills-based recruitment strategies for better talent decisions and workforce outcomes. This post was first published on eLearning Industry.

11 May 2026

The Implications of Cyber-Physical Security Convergence in Higher Education

The lines that once clearly separated on-campus physical security systems from cybersecurity are increasingly blurred, due to many factors, not least of which is the technology itself. The advent of Internet of Things technology, followed quickly by smart security cameras and their enabling devices, alongside a host of other networked facilities technologies such as access card readers and biometric devices, has had a significant impact on today’s college campuses. All of these tools not only increase an institution’s attack surface, they also require sophisticated management and oversight,…

11 May 2026

The Authoring Tool Market Has Changed: How To Choose Wisely In 2026

Cloud-first platforms, rising costs, stricter security requirements, and increasing AI expectations are transforming how L&D teams select course creation software. Let's explore how to choose your first authoring tool (or switch from your current one) with more confidence and fewer costly surprises. This post was first published on eLearning Industry.

11 May 2026

An AI Adoption Imperative: Centralized Sources of Governed Truth

Strategies for enterprise teams who aim to build a data foundation to move the institution from AI experimentation to real-world execution.

11 May 2026

Why Organizations Misread Training Gaps

Organizations are increasing investment in training to address performance gaps, yet outcomes remain inconsistent, largely due to persistent confusion between abilities, skills, and competencies. This post was first published on eLearning Industry.

11 May 2026

Changing Work Flow of Schools

By: Riley Black Your feed is loaded with glowing reviews of the latest AI-powered teaching tools. They promise to save you time by assessing student work, creating customized curriculum, or writing multilingual parent newsletters. Your students have access to personalized AI tutors, automated writing and editing tools, podcast generators, research assistants, virtual labs and field trips, and multimodal textbooks. The bounty of educational technology is undeniable. There is just one problem. The workflow of school is unchanged. This means the loudly touted benefits of AI have not been felt. The core structure of what we would recognize as ‘traditional’ K–12 classroom instruction has been in place for more than a century. It is so ingrained in our cultural practices that it is almost impossible to view objectively. We may need an outside observer to see it clearly. Educators who have worked with me over the decades have remarked on multiple occasions that I behave very much like Star Trek’s Mr. Spock. I interact with humans but I don’t understand them. So let me embrace my inner Spock and describe, procedurally, what a school day looks like to a non-human observer. From there, we can reimagine what the workflow of school could look like if we really wanted to maximize the effectiveness of the AI tools we have at our disposal. What School Looks Like Now To: Epsilon Eridani Education Hive From: Humanshell Ross #ID 19195512 In Re: Terran Education Systems The instructional environment is highly structured and time-segmented. The Earth school day is divided into discrete intervals, each governed by externally imposed signals such as bells or scheduled transitions. Human subjects identified as “students” move in coordinated groups between physical locations, while a designated authority figure, the “teacher,” remains fixed within a defined instructional space.
The teacher initiates and terminates most activities, regulates pacing, and controls access to resources and discourse. Within the instructional interval, communication follows a patterned sequence. The teacher frequently occupies the role of primary information transmitter, delivering content verbally or through visual display systems. Students alternate between passive reception and prompted responses. These responses are often prompted through questions that have pre-established correct answers. Participation is unevenly distributed, with a subset of students responding publicly while others remain silent but are still subject to evaluation. Written artifacts, on paper or digital devices, are produced as evidence of engagement and comprehension. Behavioral norms are explicitly and implicitly enforced. Students are expected to maintain physical stillness for extended periods, orient their attention toward the teacher or assigned task, and regulate peer-to-peer interaction unless permitted. Compliance is monitored continuously. Deviations, such as unauthorized movement or communication, are corrected through verbal cues or other behavioral interventions. Positive reinforcement is occasionally applied, though correction is more frequent than affirmation in maintaining group order. Assessment mechanisms are embedded throughout the process. The teacher collects observable outputs, including verbal responses, written work, and task completion indicators, to infer internal cognitive states. These inferences are recorded and translated into quantitative measures. The accumulation of these measures appears to influence future opportunities and categorizations of student capability. The overall system exhibits characteristics of centralized control, standardized sequencing, and constrained interaction. Individual variation among students is present but is managed within the boundaries of the procedural framework. 
The system’s primary function is the transfer, rehearsal, and verification of knowledge and skills within a fixed temporal and spatial structure. Recommendation: Reallocate resources to more promising systems. What School Could Look Like That’s what an alien would see. This is what one nominally human expert would propose. The most immediate shift is the removal of synchronized time blocks and whole-group pacing. Learners no longer move through a fixed schedule; instead, they operate within a continuously adaptive learning environment. An AI orchestration layer monitors progress across competencies and dynamically assigns tasks, resources, and collaborators. Platforms such as Khan Academy’s Khanmigo, Muzzy Lane-powered simulation environments, or OpenAI-based tutoring agents (Praktika.ai, for example) function as always-on guides, adjusting difficulty, prompting reflection, and surfacing misconceptions in real time. The day becomes a fluid progression of learning states rather than a series of clock-bound activities. The role of the teacher transforms into that of a systems designer and intervention specialist. Rather than delivering content, the teacher configures learning pathways, curates tools, and monitors real-time data across domains. Tools like Google Classroom analytics, Canvas with AI plugins, or custom dashboards built on top of OpenAI APIs provide visibility into student thinking, not just outputs. The teacher intervenes selectively, focusing on moments where human judgment, ethical reasoning, or emotional support is required. Instructional content is no longer delivered as static lessons but as interactive, multimodal experiences generated on demand. A learner studying ancient Egypt could enter a real-time simulation generated by platforms like Unreal Engine paired with generative AI.
There, they interact with historically grounded characters powered by conversational models. Instead of reading about irrigation systems, the learner experiments within a simulated Nile environment, testing variables and receiving immediate feedback. Knowledge acquisition becomes inseparable from application and experimentation. Assessment shifts from periodic evaluation to continuous evidence capture. Every interaction with an AI system generates data about reasoning processes, decision-making patterns, and persistence. Tools such as Turnitin’s AI writing analytics, ETS-style competency models, or emerging “process-based assessment” platforms track how a learner arrives at an answer, not just whether the answer is correct. Portfolios are automatically constructed, containing annotated transcripts, drafts, revisions, and reflections that demonstrate growth over time. Collaboration is restructured through intelligent grouping systems. AI agents analyze learner profiles and assemble teams based on complementary strengths, cultural perspectives, and working styles. Within these teams, each learner may be supported by a personalized AI copilot, such as a Gemini-based agent configured for specific roles like fact-checking, design critique, or ethical analysis. The human-to-human interaction remains central, but it is augmented by AI systems that scaffold and extend group cognition. The development of dispositions becomes explicit and measurable. Systems are designed to prompt metacognition, resilience, and ethical awareness. For example, an AI tutor may intentionally introduce ambiguity or conflicting information, requiring the learner to evaluate sources and justify decisions. Platforms aligned with frameworks like UNESCO’s AI competency standards or Digital Promise’s AI literacy model can embed these prompts directly into tasks, ensuring that habits of mind are cultivated alongside academic skills.
Learning extends beyond institutional boundaries through persistent AI companions. These systems, accessible across devices, maintain continuity between formal and informal contexts. A learner might begin a project in a structured environment and continue refining it at home, in transit, or in a community setting, with the AI tracking progress and suggesting next steps. Tools like Notion AI, personal knowledge management systems, or customized GPT agents serve as long-term cognitive partners rather than task-specific assistants. The overall system operates less as a delivery mechanism and more as an adaptive ecosystem. Control is distributed, pathways are individualized, and feedback loops are immediate and continuous. The central function shifts from managing groups through standardized procedures to cultivating individuals within a network of intelligent tools, human relationships, and real-world applications. Paving the Cow Path In digital transformation, “paving the cow path” refers to automating a manual, inefficient process without first fixing the underlying logic. The core of this thinking is that while AI models are now smart enough to handle complex tasks, they are being bolted onto existing workflows rather than being used to transform them. This is surely the case in schools, which is why there are so many complaints about the lack of positive outcomes from the rapid and often uncoordinated implementation of AI. In some cases, this frustration has gone so far as to spark calls for a five-year moratorium. When I contemplate the dysfunctional nature of K-12 education, my thinking becomes childish. As much as I like the analogy of “paving the cow path,” I always revert to an older analogy and a more ancient creature. The industrial workflow of K-12 teaching and learning can be likened to the fate of dinosaurs. The COVID-19 pandemic was the first asteroid to disrupt a timeless way of life. The birth of ChatGPT in November of 2022 was the second.
And yet the dinosaur plods on, dimly aware that new environmental conditions might ensure its demise, but unable or unwilling to adapt. Schools are investing billions of dollars in AI learning tools. We are creating national policies that require a K-12 scope and sequence of AI literacy. And yet we are unwilling to make the hard political choices needed to ensure that this investment is worth the effort. Riley Black, author of The Last Days of the Dinosaurs: An Asteroid, Extinction and the Beginning of Our World, offers the last word: “Beginnings need endings, a lesson that we can either hold carefully or that we can deny until it finds us.” The post Changing Work Flow of Schools appeared first on Getting Smart.

11 May 2026

ALIGN: A Research-Informed Framework For Making EdTech Implementation Improve Learning

ALIGN is a research-informed EdTech framework that helps schools turn technology adoption into learning impact through five pillars: Assessment, Logistics, Integration, Growth, and Navigation. This post was first published on eLearning Industry.

11 May 2026

What higher ed can do about getting research into the K-12 classroom

Key points: Educators need research that is accessible, relevant, and actionable. Educational research has never been more abundant, yet its impact on classroom practice remains uneven at best. While universities continue to produce studies on instructional strategies, student outcomes, and emerging technologies, many K-12 educators rarely engage with this work in meaningful ways. The issue is not a lack of interest. It is a failure of access, translation, and alignment. Recent survey data from 263 K-12 educators highlights a persistent gap between research production and classroom application. While educators overwhelmingly value research, only a small percentage engage with it regularly, and many turn instead to informal sources such as blogs, social media, and peer conversations for guidance. This disconnect raises an important question for higher education: If research is not being used, what must change? The real barriers are structural, not motivational One of the most consistent findings is that educators are not resistant to research; they are constrained by their professional environments. Time remains the most significant barrier, with the vast majority of educators reporting that they lack the capacity to regularly review and interpret research findings. Even when time is available, the format of academic research often works against its use. Dense language, methodological complexity, and limited accessibility make it difficult for practitioners to quickly identify what matters for their classrooms. This leads educators to prioritize sources that are easier to access and interpret.
Blogs, podcasts, and social media are used at significantly higher rates than academic journals, even though educators often view those traditional sources as more credible. In other words, convenience frequently outweighs credibility, not because educators prefer lower-quality information, but because it is usable within the constraints of their daily work. Relevance is the gatekeeper of research use Beyond access, relevance plays a critical role in whether research is used. More than 80 percent of educators report that they are most likely to engage with research that directly connects to their classroom or school context. This aligns with what many practitioners already know intuitively: Research that feels abstract or disconnected from real-world challenges is unlikely to influence practice. The topics educators prioritize, such as social-emotional learning, differentiated instruction, and behavior management, reflect immediate and pressing classroom needs. When research addresses these areas in clear, actionable ways, it is far more likely to be used. When it does not, it becomes another unread article in an already-crowded professional landscape. The format problem: Research isn’t designed for practitioners Perhaps the most actionable finding is not about what research says, but how it is delivered. Educators consistently report a preference for concise, practical formats: infographics, short summaries, videos, and step-by-step implementation guides. Traditional journal articles, while essential for academic rigor, are rarely structured with practitioner use in mind. This is where higher education has an opportunity to rethink its approach. If the goal is to influence practice, research must be translated into forms that align with how educators consume information. This does not mean abandoning rigor. It means adding a second layer of communication, one that prioritizes clarity, brevity, and applicability.
The power of professional communities Another key insight is the role of professional relationships in shaping research use. Discussions with colleagues, professional development sessions, and conferences are consistently rated as the most valuable sources of information. These environments allow educators to interpret research collectively, adapt it to their contexts, and build confidence in its application. This suggests that research dissemination should not be viewed as a one-way process. Instead, it should be embedded within collaborative structures where educators can engage with ideas, ask questions, and share experiences. Professional learning communities (PLCs), for example, offer a natural venue for this kind of engagement, yet they are often underutilized as research translation spaces. The missing link: Stronger higher ed–K-12 partnerships Despite the clear need for collaboration, formal partnerships between K-12 schools and higher education institutions remain limited. In the survey, only about one in five administrators reported having a formal relationship with a college or university. This lack of structured collaboration contributes to the disconnect between research and practice. Stronger partnerships could address multiple challenges simultaneously. Universities gain a better understanding of classroom realities, leading to more relevant research questions. Schools gain access to current research and expertise, delivered in ways that support implementation. Most importantly, these partnerships create a feedback loop where research and practice can inform one another. What higher education can do next If higher education institutions want their research to have greater impact, several shifts are necessary: Translate research into usable formats. Every major study should include a practitioner-facing summary with clear implications for practice. Prioritize relevance in research design. 
Engaging educators in the research process can help ensure that studies address real-world challenges. Embed research into professional learning structures. Partner with schools to integrate research discussions into PLCs and ongoing professional development. Leverage digital platforms strategically. Short-form content, including videos and infographics, can extend the reach of research findings. Build sustained partnerships, not one-off interactions. Long-term collaboration is essential for meaningful impact. Moving from access to application The gap between research and practice is not new, but it is increasingly untenable in a field that relies on evidence-based decision-making. Educators are not asking for more research. They are asking for research that is accessible, relevant, and actionable. Higher education is uniquely positioned to meet this need, but doing so requires a shift in mindset. Research cannot end at publication. It must extend into translation, collaboration, and application. When that happens, research moves from being something educators occasionally consult to something they consistently use, and that is where its true value emerges. This article was based on the survey research originally reported in Bridging the Gap: Simplifying Access to Research for K-12 Educators, Research Issues in Contemporary Education, 10(2), 25-44 by the same authors.

11 May 2026

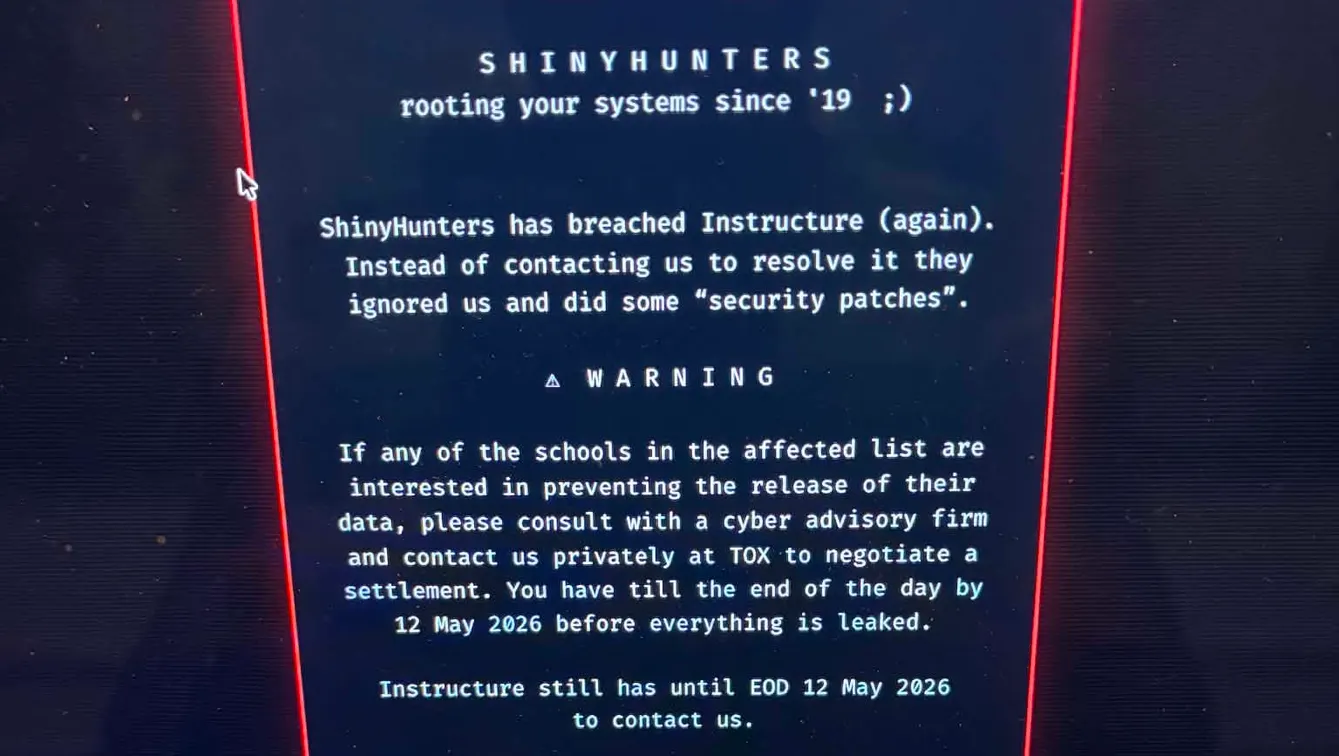

Week In Review: Cyberattacks and federal allegations

Most-clicked story of the week: A recent cybersecurity attack against Instructure exposed certain student information, the ed tech giant confirmed in a May 1 status update on its website. In a subsequent update the following day, the company said it believed the incident had been contained. According to Instructure, the data breach impacted information including messages between users, names, email addresses and student ID numbers. But, the company said, it wasn’t believed to have compromised any passwords, dates of birth, government identifiers, or financial information as of May 2. While Instructure said it is actively investigating the incident alongside forensics experts, it has not disclosed how many school districts were affected. A second incident targeting Canvas occurred on May 7. Number of the week: 1% The percentage of pending cases with the U.S. Department of Education’s Office for Civil Rights in which resolution agreements were reached in 2025, according to a recent report from the office of Sen. Bernie Sanders, I-Vt. The percentage is the lowest for the office under any presidential administration over the last decade. Allegations abound The National Education Association was accused of antisemitism by the Louis D. Brandeis Center Coalition to Combat Anti-Semitism, according to a complaint filed with the U.S. Equal Employment Opportunity Commission on April 29. The Brandeis Center Coalition filed the charge on behalf of current and former members of the nation’s largest educator union, saying they “have been harmed by the NEA’s discrimination against Jewish members and its toleration and promotion of a hostile environment.” The Education Department’s Office for Civil Rights launched a Title IX investigation into the Los Angeles Unified School District on May 5, alleging one of the nation’s largest districts “appears to be protecting sexual predators at the expense of its students,” according to an agency announcement.
The investigation was launched over policies the department said “appear to automatically reassign teachers accused of sexual misconduct with students, including engaging in exploitative ‘romantic relationships,’ to another school.” The district contests the allegations. Physical and digital student safety Roughly 90% of LGBTQ+ youth say recent anti-LGBTQ+ laws, policies and debates have caused them stress or anxiety, according to 2025 data released by The Trevor Project in its annual report tracking LGBTQ+ mental health. Over a third of LGBTQ+ young people reported they seriously considered attempting suicide, while 1 in 10 actually attempted it. School safety can be enhanced by strategies that help students feel connected and supported, according to a recent blog post by the Learning Policy Institute. Research suggests that investments in evidence-based approaches that build a psychological sense of safety for students can help prevent school violence, the blog said. While schools also need physical safety protections, LPI said, some technologies being used in schools lack data on effectiveness and can even lead to harm. A bill that would ban artificial intelligence companions from interacting with children and teens received unanimous approval from the U.S. Senate Judiciary Committee on April 30 and now awaits Senate floor action. The Guidelines for User Age-verification and Responsible Dialogue Act, or the GUARD Act, would require age verification for all users to interact with AI chatbots. Companies with AI chatbots would also face criminal penalties of up to $100,000 per offense if their tools describe or engage in sexually explicit content or encourage or promote physical or sexual violence with someone under the age of 18. 
In the classroom Some 90% of middle and high school English language arts teachers assigned at least one full book in the 2024-25 school year, with two-thirds saying they planned to assign between one and four full books to their students, according to nationally representative data released by Rand Corp. On average, teachers assigned four full books, and most teachers (60%) assigned more books than required in the curricula. But teachers serving historically marginalized students assigned fewer full books, according to the data. Schools that required students to keep their cellphones in lockable pouches during the school day saw an uptick in suspension rates and a decrease in student well-being in the first year the cellphone policies were implemented. However, those negative effects dissipated in subsequent years, according to new research from the National Bureau of Economic Research. The study found an 80% decline in students’ reported personal cellphone use in classrooms following adoption of cellphone storage policies.

11 May 2026

In an AI-driven world, the most important skills are still human

Across higher education, artificial intelligence is now embedded in everyday academic work, from early research to final drafts. For many students, it has become a default starting point. The urgent question is not whether students use AI, but how they use it, specifically whether these tools are reinforcing learning or bypassing the cognitive work that leads to it. As AI accelerates core academic tasks, educators are confronting a central challenge: how to preserve depth, judgment and intellectual engagement in an environment optimized for speed. To address this challenge, educators are turning to practical frameworks that help students think more intentionally about their use of AI. Now in its third edition and used by faculty and staff at more than 4,000 colleges, universities and schools across 170 countries, Elon University’s Student Guide to Artificial Intelligence has become a widely adopted, free global resource for AI literacy. The newly released 2026 installment arrives as institutions seek practical ways to help students engage AI thoughtfully. Titled “Human Wisdom for the Age of AI: A Field Guide to Cultivating Essential Skills,” the publication focuses on the habits of mind students need to navigate AI effectively. Published in partnership with the American Association of Colleges and Universities and The Princeton Review, the new edition offers a distinctly human-centered approach. There are engaging and fun exercises, all framed by wise quotes from great thinkers throughout history. The guide is free to download as a PDF and includes teacher’s guide learning modules, with group exercises, worksheets and discussion prompts. There is also an engaging online self-assessment tool that helps students reflect on how they use AI with scores that describe their reliance on these tools. “We are excited to share this hands-on field guide with teachers and learners around the world,” said Elon University President Connie Book.
“We must not lose sight of the enduring principles that have always driven human progress. This publication bridges the gap between rapidly expanding algorithmic power and the timeless wisdom of the liberal arts. It empowers students to harness AI technologies where appropriate without sacrificing the empathy, judgment and creative autonomy that only a human mind can provide.” Balancing efficiency with depth AI systems can summarize readings, generate ideas and accelerate tasks that once took hours. Used well, these tools can expand access and spark new forms of creativity. But there is also the temptation to outsource thinking altogether. That reality elevates the importance of foundational human skills: critical thinking, ethical reasoning, creativity and communication. These are the capabilities that allow individuals to question outputs, recognize bias and decide when a machine’s answer is not enough. “As artificial intelligence reshapes how we learn, work and create, the essential skills students need are not disappearing—they are evolving,” said AAC&U President Lynn Pasquerella. “Capacities such as critical inquiry, ethical reasoning, creativity and communication are more important than ever because they enable students to engage AI thoughtfully, question its outputs and apply knowledge with judgment and purpose. This guide underscores a central truth: in an age of increasingly powerful machines, the learning outcomes of a liberal education are the foundation for meaningful and responsible innovation.” From awareness to intention One of the most meaningful shifts underway is behavioral. Students are moving from simply using AI to thinking more deliberately about how and why they use it. That might mean pausing to ask: What am I trying to learn here? Is this tool helping me engage more deeply—or avoid that effort? Where should my own judgment take the lead? 
Educators are increasingly embedding these questions into coursework, helping students build the discernment needed to navigate an evolving digital landscape while preserving the deeper purpose of education. At the same time, AI presents an opportunity to reaffirm what has always mattered: cultivating thoughtful, curious and creative individuals who can navigate complexity with care and intention. “Through our research at The Princeton Review, we consistently see that students are both excited by AI and uncertain about how to use it well,” said Editor-in-Chief Rob Franek. “What they’re really looking for is guidance. This field guide meets that moment by translating big ideas, like critical thinking, creativity and ethical decision-making, into practical habits students can use every day.” As with previous editions, colleges and universities are encouraged to download and distribute the “Human Wisdom for the Age of AI” field guide on their campuses, and faculty may use and adapt the content under the terms of a Creative Commons license. All the resources of this guide are available at: studentguidetoAI.org.

11 May 2026

Zedbud Launches Unified Communications And Learning Platform

The platform unifies school communication, student collaboration, and family engagement in one secure, FERPA-compliant environment designed for real classrooms, real families, and real district oversight. This post was first published on eLearning Industry.

11 May 2026

How Higher Ed Can Innovate on AI Without Sacrificing Privacy

Recorded live at the 2026 EDUCAUSE Cybersecurity and Privacy Professionals Conference, Sophie and Jenay talk with Ben Archer and Michael Tran Duff about frameworks for supporting innovation in higher education while protecting data privacy in the age of AI.

11 May 2026

How Higher Education Is Responding to the Canvas LMS Incident and Preparing for What's Next

A recent ransomware attack on Instructure's Canvas LMS has raised concerns across the higher education community about cybersecurity, data privacy, third-party risk, and institutional preparedness. More than 950 EDUCAUSE community members joined an EDUCAUSE QuickTalk webinar to discuss campus impacts, share questions, and explore how institutions are responding.

11 May 2026

From AI Promise To Capability: What L&D Teams Need To Close The Skills Gap [eBook Launch]

Want practical insights into workplace learning, key trends, and real research to guide your organization in building real capabilities? Check out this new guide from TalentLMS. This post was first published on eLearning Industry.

10 May 2026

Egypt’s new vision: Education at core of economic competitiveness

Education is becoming one of the defining pillars of global competitiveness at a time when technology and artificial intelligence are rapidly reshaping economies and labour markets worldwide. This was the central message delivered by Minister of Education and Technical Education Mohamed Abdel Latif during a conference titled “Education Conference: Investing in Shaping the Future of Education in Egypt” organised by the American Chamber of Commerce in Egypt (AmCham Egypt) on Sunday. The minister stressed that education can no longer be separated from economic development, particularly as nearly one million young Egyptians enter the labour market each year. In this context, investment in education is not merely a social responsibility, but a strategic economic necessity aimed at preparing future generations for an increasingly competitive global economy. Abdel Latif explained that technological advancement has become a key driver of economic growth, making it essential for educational systems to adapt quickly to changing labour market demands. He warned that when education fails to generate meaningful employment opportunities, the consequences extend beyond the economy to affect social stability and human development. He therefore underscored the importance of aligning education more closely with labour market needs while integrating technology, digital learning tools, and artificial intelligence into classrooms and vocational training programmes. The minister also highlighted the need for stronger cooperation between the government and the private sector in modernising Egypt’s education system. With a population exceeding 100 million and nearly 30 million students enrolled across different educational stages, he said the scale of the challenge requires coordinated efforts to enhance skills development, improve educational quality, and expand access to modern technologies. 
Public-Private Partnership as a Catalyst for Reform
Discussions during the conference reflected broad consensus that sustainable educational reform cannot be achieved by the government alone. Hossam Badrawi, education expert and chairperson of the Badrawi Foundation, said the most dangerous form of poverty is not the lack of money, but the lack of opportunity. According to him, education remains the most powerful tool for individuals and nations to compete, grow, and build stronger societies. Badrawi argued that developing knowledge and human capabilities requires an effective partnership between the public and private sectors. He noted that Egyptians deserve a genuine educational transformation built on investment in knowledge, values, awareness, and practical skills. Reflecting on his experience leading a committee of 70 experts contributing to Egypt Vision 2030, he said education must be treated as a national development project capable of producing generations equipped with innovation, critical thinking, and the ability to contribute meaningfully to society. He also stressed the importance of maintaining consistent educational policies and ensuring sufficient investment in the sector. Referring to the constitutional allocation of 6% of national income to education, Badrawi described educational spending as a necessity rather than a luxury, while calling for stronger oversight of both funding levels and spending mechanisms. For his part, AmCham Egypt Chairperson Omar Mehanna said education is no longer simply a social issue, but a strategic economic priority in a world increasingly shaped by technology, artificial intelligence, and global competition. He noted that investment should extend beyond infrastructure to include improving educational quality, fostering innovation, and strengthening students’ ability to compete internationally.
The conference brought together policymakers, educators, and business leaders to exchange ideas on expanding private-sector participation in education and exploring opportunities within Egypt’s rapidly growing education market. Participants agreed that bridging the gap between education and labour market requirements has become an urgent priority, particularly as industries increasingly demand advanced technological and practical skills. During the opening session, Sylvia Menassa, CEO of AmCham Egypt, highlighted the importance of investment in education as a key driver of student development and economic growth. Meanwhile, Ahmed Wahby and Sarah El Kala pointed to the significant opportunities within Egypt’s education sector while stressing the need to narrow the gap between academic learning and labour market demands. The discussions ultimately reflected a shared understanding that Egypt’s economic future will depend heavily on building a modern, flexible, and competitive education system capable of preparing younger generations for the demands of a rapidly evolving global economy. The post Egypt’s new vision: Education at core of economic competitiveness first appeared on Dailynewsegypt.

10 May 2026

Cloud Vs. On-Premise LMS: Which One Is Secretly Bleeding Your Budget?

Choosing the right LMS hosting model isn't just technical: it directly impacts your cost, scalability, control, and long-term effort. Cloud LMS offers speed, flexibility, and low maintenance, making it ideal for growing and remote teams. This post was first published on eLearning Industry.

10 May 2026

ChatGPT outscores real candidates in entrance exam for a top university

JAPAN - AI models have surpassed the highest score achieved by any candidate in this year's University of Tokyo entrance exam.

9 May 2026

Hot Off The Virtual Press: The Book Of Allenisms

Want to find out the 35+ principles that can help you elevate your eLearning design? Download The Book Of Allenisms for key insights from seasoned industry pros. This post was first published on eLearning Industry.

9 May 2026

$5K Sanctions for Repeated Mis-Citation in Coomer v. Lindell / My Pillow Election-Related Libel Suit

From Thursday's decision by Judge Nina Wang (D. Colo.) in Coomer v. Lindell: This is a defamation case brought by Plaintiff Eric Coomer ("Plaintiff" or "Dr. Coomer") over accusations that he used his position at Dominion Voting Systems to interfere with the results of the 2020 presidential election. The case went to trial, and the jury delivered a partial verdict for Plaintiff. The verdict included a punitive damages award against Frankspeech.
[A.] The First Order to Show Cause and Sanctions Order
Before trial, Plaintiff filed a motion in limine. Defendants then filed a response brief that included "nearly thirty defective citations." … After questioning from the Court at the Final Pretrial/Trial Preparation Conference ("Pretrial Conference"), Mr. Kachouroff eventually admitted that he had used artificial intelligence ("AI") in drafting the response brief. He also represented that he had delegated citation checking for the brief to his co-counsel, Jennifer DeMaster ("Ms. DeMaster")…. The Court concluded that a $3,000 sanction on Mr. Kachouroff and his law firm and a $3,000 sanction on Ms. DeMaster was the "least severe sanction adequate to deter and punish defense counsel in this instance." The Court declined to extend the sanction to Defendants themselves.
[B.] The Second Order to Show Cause
After trial, and after the Court's first sanctions order, the Parties submitted their post-trial motions. Plaintiff moved to increase the punitive damages award against Frankspeech, pursuant to Colorado law. In relevant part, Frankspeech's response brief ("Response") argued that such an award would violate the Reexamination Clause of the Seventh Amendment. The brief stated, "The 10th Circuit recognized in Capital Solutions, LLC v. Konica Minolta Business Solutions USA, Inc., 695 F.Supp.2d 1149, 1154-56 (10th Cir. 2010), that the jury's determination on this issue [i.e., the amount of punitive damages] is entitled to finality."
In its Order on Post-Trial Motions, the Court observed that the Capital Solutions citation is defective for two reasons. First, Capital Solutions is a district court decision, even though Frankspeech erroneously referred to it as a Tenth Circuit case. Second, Capital Solutions does not support the proposition that a jury's determination of the amount of punitive damages is "entitled to finality" under the Reexamination Clause. The Court explained that a reasonable review should have alerted defense counsel to this mistake. And given that counsel had already been sanctioned for "this exact type of error," the Court ordered Mr. Kachouroff, Ms. DeMaster, and Frankspeech to show cause why they should not be sanctioned again under Rule 11….
[C.] Violation of Rule 11
Mr. Kachouroff concedes that he made a "real error" in both his description of Capital Solutions's holding and his reference to it as a Tenth Circuit decision. Although he does not know why the error occurred, he asserts that Capital Solutions's discussion of Tenth Circuit precedent is on point. He claims that he cite-checked the brief and did not use AI other than Westlaw for legal research. Finally, he argues that sanctions are unwarranted because the error was minor and "the legal principle for which [he] cited Capital Solutions is good law." The Court has a significant amount of skepticism that the Capital Solutions misrepresentation resulted only from human error. True, the Court could write off one erroneous reference to the Tenth Circuit as a typographical error. But the Response makes the same obvious mistake twice in quick succession: "The 10th Circuit recognized in Capital Solutions, LLC v. Konica Minolta Business Solutions USA, Inc., 695 F. Supp. 2d 1149, 1154–56 (10th Cir. 2010)…." And this type of misattribution error—deploying an otherwise correct citation but attributing it to the wrong court—is a form of hallucination that has already occurred in this case.
For instance, in the brief that previously led to sanctions, Mr. Kachouroff claimed that the "District of Colorado" had addressed an issue, citing "Ginter v. Northwestern Mut. Life Ins. Co., 576 F. Supp. 627, 630 (D. Colo. 1984)" in support. While the case name and reporter for Ginter are correct, it was not issued by a court in this District. See Ginter v. Nw. Mut. Life. Ins. Co., 576 F. Supp. 627, 630 (E.D. Ky. 1984). Similarly, Mr. Kachouroff's prior brief asserted that "[t]he Tenth Circuit…specifically addressed" a certain evidentiary issue in "United States v. Hassan," with a citation to "Hassan, 742 F.3d 104, 133 (10th Cir. 2014)." But Hassan is a Fourth Circuit decision. See United States v. Hassan, 742 F.3d 104, 133 (4th Cir. 2014). Viewed against this backdrop, the nature of the errors in the Response suggests that this is not the kind of mistake a human attorney would make. Furthermore, Mr. Kachouroff's statements to the Court in this case do not inspire confidence. He currently attests, "I did not use any Generative Artificial Intelligence program to create Document 404 with the exception of Westlaw which I used solely for the purpose of legal research." But Mr. Kachouroff has previously represented that he "routinely" uses AI tools to prepare his arguments. And strikingly, despite adamantly attesting in response to the First Order to Show Cause that "I do not rely on AI to do legal research or find cases," Mr. Kachouroff now admits that "the circumstances at issue here are not, respectfully, the same type of AI generated error in a draft pleading that was the subject of that earlier sanction." The Court is also unimpressed by Mr. Kachouroff's attempts to minimize his conduct. The Capital Solutions citation is located prominently in the Response; it is the only citation in the first paragraph of the first page of the brief. And as the Court previously explained, the citation error is obvious. Any lawyer—especially one of Mr.
Kachouroff's experience—would or should recognize that a case reported in the Federal Supplement is from a district court, not a circuit court. This error would be apparent upon even a brief inspection of the first page of the Response. The obviousness of the error alone indicates that Mr. Kachouroff failed to reasonably review the Response before filing it. Regardless of whether or not generative AI was used, this is not the type of error a seasoned attorney would or should make. In and of itself, falsely citing a district court case as binding authority is a "material" error. This Court is bound by published Tenth Circuit and Supreme Court opinions, not the decisions of other district courts. By holding out Capital Solutions as a Tenth Circuit decision, Mr. Kachouroff misrepresented the "legal significance" of its holding. Yet Mr. Kachouroff asks the Court to overlook the "citation error" because his "description of Capital Solutions did not involve the assertion of an unsupported legal proposition." The Court respectfully disagrees. The Response misstates both Capital Solutions's holding and the applicable Seventh Amendment law. In relevant part, the Response asserts, "Among the issues historically committed to the jury is the amount of punitive damages—a factual question within the meaning of the Seventh Amendment's Reexamination Clause. The 10th Circuit recognized in Capital Solutions…that the jury's determination on this issue is entitled to finality." The Court has already explained why this description of Capital Solutions's holding is misleading…. Mr. Kachouroff suggests that he merely gave an "imprecise description of the case's procedural posture." But Capital Solutions's holding was inextricably intertwined with its procedural posture; whether a jury must decide the amount of punitive damages in the first instance was the substantive constitutional question before the court. And Mr.
Kachouroff still fails to acknowledge that Capital Solutions turned on the Seventh Amendment's "trial by jury" clause, not the Reexamination Clause. This distinction matters because Supreme Court precedent contravenes Mr. Kachouroff's assertion that "the amount of punitive damages" is a "factual question" on which the jury's verdict is protected by the Reexamination Clause. As this Court pointed out, the Supreme Court has held that "the level of punitive damages is not really a 'fact' 'tried' by the jury," so judicial review of the amount of an award does not offend the Reexamination Clause. Capital Solutions addressed Cooper Industries and expressly disclaimed any reliance on the Reexamination Clause. But despite relying on Capital Solutions and other cases analyzed in that opinion, the Response still cited Capital Solutions in support of the argument that the Reexamination Clause "entitle[s]" a jury's punitive damages award "to finality." Even after the Court specifically noted Cooper Industries in its Order on Post-Trial Motions, Mr. Kachouroff maintains that Capital Solutions "support[s] the proposition I made" in the Response. That is simply not true…. This is the latest incident in what is now a pattern of Mr. Kachouroff submitting briefs with citations that "misrepresent[ ] what courts have said." … The judiciary undermines its own central purpose of administering justice for the public good—and the public's confidence in the institution—when it permits attorneys to breach their duties, including diligence and candor, owed to the court and the public without consequence…. Having reviewed the entirety of the record, the Court finds that an additional, moderately increased monetary sanction of $5,000 is sufficient to deter Mr. Kachouroff and similarly situated individuals from engaging in this conduct. The Court will not refer Mr. Kachouroff to the Virginia Bar for disciplinary proceedings. In doing so, the Court specifically relies on Mr. 
Kachouroff's representation that he has stepped back from "active trial-level litigation other than matters necessary to conclude existing obligations which include the present show-cause proceedings and limited local-counsel responsibilities in one remaining case. I do not intend to return to trial work which I have done for the past 25 years," due to health issues. {Counsel has filed a Motion for Protec[ti]ve Order to Restrict Public Access ("Motion to Restrict"), seeking restriction of a Second Affidavit submitted by Mr. Kachouroff detailing specific health issues…. This Court agrees that Mr. Kachouroff's private health information is appropriately restricted from public access but respectfully disagrees that the public has no justiciable interest in other statements contained in the Second Affidavit, given Mr. Kachouroff's reliance on that information to support his Response to the Second Order to Show Cause. Accordingly, the Court ORDERS Mr. Kachouroff to file a publicly accessible version of his Second Affidavit, with only his private health information redacted….} The post $5K Sanctions for Repeated Mis-Citation in Coomer v. Lindell / My Pillow Election-Related Libel Suit appeared first on Reason.com.

9 May 2026

The Future Of Corporate Learning: Data, AI, And Adaptive Experiences

Corporate learning is shifting to data-driven, AI-powered models that personalize training, improve engagement, and prepare employees for future skills. This post was first published on eLearning Industry.

9 May 2026

Nicholas Burbules: “Instead of banning AI, we have to give students better reasons to learn”

For more than 25 years (since before ChatGPT, smartphones and social media), Nicholas Burbules has been reflecting on the promises and risks of “new” technologies in education, as well as on the concept of “ubiquitous learning”: the possibility of learning at any time and in any place thanks to digital devices. Burbules holds a PhD in Philosophy of Education from Stanford University and is professor emeritus at the University of Illinois, in the United States. He was in Argentina to take part in the VII Seminario de Innovación Educativa de Ticmas at the Feria del Libro. In conversation with Infobae, he analyzed the educational impact of artificial intelligence and argued that, if the goal is to keep students from delegating essential formative processes to technology, the key is to rebuild the motivation to learn. –In 2001 you published a landmark book on the “risks and promises” of new technologies in education. Twenty-five years on, what do you think the risks and promises are today? –When I wrote my book more than 25 years ago, I saw the internet and social media as an extraordinary opportunity to connect people, build communities and broaden access to information. That potential still exists, but today we also see its negative effects much more clearly: addiction, political polarization, the deterioration of democratic conversation and growing difficulty in engaging with those who think differently. Social media, driven by algorithms designed to hold our attention, often do not connect us with diversity but instead reinforce our own beliefs. I think that, in retrospect, we are coming to understand that some of these tools broke fundamental social dynamics that may not be easy to repair.
The history of educational technology has often been marked by exaggerated or incomplete promises: tools that offer real benefits but also bring unforeseen consequences. I remember that some 20 years ago I was debating with officials at the Ministry of Education about the use of cell phones in schools. At the time, I defended their presence in the classroom, because I saw phones as learning tools. That debate is still alive today, but the context has changed profoundly. Back then, the impact of social media was still very incipient; today it is a constant, pervasive part of young people's daily lives. And we also know much more about its addictive nature. We understand ever better that social media and the algorithms behind them are designed to capture and hold attention. Their logic is to keep people connected for as long as possible, feeding them content that reinforces their interests, desires or insecurities. For young people, this has profound consequences. It is no longer just a matter of distraction in the classroom, but of a much more complex psychological and social relationship with technology. I remember the testimony of a teenage girl who said that an experience only became “real” for her once she had shared it online. That shows the extent to which these platforms can shape the very perception of reality. We also see worrying effects on self-esteem, body image and the constant need for social validation, especially among adolescents. Many young people internalize unrealistic standards of appearance, popularity or acceptance. None of this seemed to be part of the original promises of these technologies. –Many education systems are banning cell phones in school. Is that a reasonable response to protect learning, or a way of denying a structural change?
–What I can say is that I see this problem differently today than I did twenty years ago. I still believe that cell phones can be valuable educational tools and that, used well, they can enrich learning. However, it is also clear that a classroom in which thirty students are looking at their phones is not an environment where learning can take place effectively. So I would not necessarily propose an absolute ban, but I do think their use should be much more closely supervised and regulated. There may be specific pedagogical moments or purposes for using them, but that is very different from allowing unrestricted access in which students simply browse social media during class. The problem is that, as with many technologies, it is hard to keep their benefits without also being exposed to their negative effects. And today those negative effects, especially those linked to social media addiction, are increasingly evident and worrying: distraction, self-esteem problems, difficulty concentrating and other psychological and social impacts. More than a discussion about technology, we are facing a much broader debate, one that is now taking place almost everywhere in the world. –One of your best-known contributions is the notion of “ubiquitous learning,” the idea that learning today happens everywhere. But not all consumption of information on a phone amounts to learning. When can we speak of ubiquitous “learning” in the strict sense? –I have been thinking and writing about ubiquitous learning for more than twenty years. When I began working on this idea, it was quite innovative: the possibility of learning at any time and in any place because we carry the internet in our pocket. At the time it sounded like a radical transformation; today it simply describes our everyday reality.
Accessing information (looking up a date, checking a fact, recalling the name of a film or settling a specific question through Google) is a form of learning, but a superficial one. We learn something we did not know before, yes, but that represents a rather limited conception of learning. A deeper idea involves understanding, critical thinking and more complex cognitive processes. It is not just about taking in data, but about developing more elaborate capacities. That is why I distinguish between “learning what” and “learning how.” We can learn information, but we can also learn skills: how to repair something at home, how to write better, how to interview, how to solve problems. Ubiquitous learning can cover both dimensions: I can use my phone to find a specific piece of information, or to acquire a new practical skill. –How does this notion of “ubiquitous learning” change with the arrival of AI? –When we bring in artificial intelligence, the picture becomes more complex. AI is very effective at helping us access information quickly, that is, at learning certain facts or content. But it is less effective when it comes to helping us develop deeper skills, such as critical thinking. It can be useful for solving concrete problems or guiding us through particular tasks, but it does not necessarily teach us to think better. In fact, one of the main risks is that people delegate too much to AI and reduce their own intellectual effort. Often, users receive an AI-generated answer and accept it as definitive, without sufficiently questioning its sources, accuracy or reliability. But AI does not replace our responsibility to evaluate information critically. It remains essential for people to analyze, compare and dig deeper for themselves. The great promise of AI is that it can expand our capacities.
But its great risk is that, if we let it do too much for us, we may weaken fundamental learning processes. This becomes particularly evident in skills such as writing. AI can generate text very efficiently, but generating text is not the same as learning to write. Writing involves developing thought, structure, argument and a voice of one's own. If a tool does all that work for us, we get a product, but we do not develop the ability. As happened with calculators in their day, some tasks can be transformed because technology makes them easier to carry out. But there are skills, such as writing, thinking critically or arguing, that it would be dangerous to outsource entirely. –Do you think banning the use of AI in education is viable? –Today, both schools and universities are facing this problem in much the same way as they did with cell phones. For many, the immediate response is to ban: to say that artificial intelligence cannot be used to write assignments, to police what students hand in and to punish its use as a form of cheating. I understand where that reaction comes from, but I am not sure it is the right response. Students are going to use these tools anyway, and over time they will find ever more sophisticated ways of doing so without being detected. The underlying problem is a different one. Instead of focusing only on prohibition, we should help students ask themselves deeper questions: why they are studying, what the point of an assignment is, what they hope to learn from it. If the best answer a student can give is “I do this to hand it in and get a good grade,” then we have failed as educators. Because the goal of an assignment is not the grade, but the learning. And there are things that can only be learned by doing the work oneself.
Writing is a good example: one learns to write by writing. If you delegate that process entirely to a tool, you may get a result, but you do not develop the skill. I think that we have contributed to this problem, in part, by placing too much emphasis on outcomes (deadlines, grades, assessments) and not enough on the learning process. We should be able to explain more clearly what a student can learn by doing an assignment on their own and why it is worth doing. Instead of banning AI, we have to give students better reasons to learn. If we fail to build that sense of purpose, they will simply take the easiest path. That is where learning is lost. –At university students are supposed to choose, but not at school: they often have to learn things that do not interest them but that are considered valuable. Do you think that in compulsory education teachers should spend more time working on motivation and meaning? –The short answer is yes. For me, one of the central questions at any level of education is what really motivates people to learn. As a philosopher, I think a lot about intrinsic motivation: the pleasure, curiosity and excitement of discovering something new. Young children usually have that naturally. Just look at the enthusiasm with which they want to understand how the world works. But, unfortunately, for many that motivation begins to weaken when they enter school. Often, school displaces that natural curiosity toward external motivations: completing an assignment, getting a grade or passing an exam. And all too often, the answer to why something must be learned ends up being simply: “Because it will be on the test.” That is a very poor answer to the problem of motivation.
I think teachers can do much more to explain why what they teach is important, interesting or valuable. Not only what has to be done, but why it is worth doing. When school focuses excessively on assessment and performance, it risks eroding the curiosity that originally drives learning. Moreover, systems based on external rewards tend to work best for those who are already academically successful, but leave behind many other students, who end up feeling that the effort is pointless for them. The challenge goes much deeper than improving content or results: it is about rebuilding the very meaning of learning. –How can more room be given to students' motivation without giving up on their also learning what does not motivate them? –Today we see that young people feel enthusiasm, curiosity and commitment for many of the activities they do on their phones. Instead of ignoring that, we should ask ourselves how to harness those interests as engines for learning. Of course, this poses challenges: how to use those tools without getting trapped in distraction, addictive logic or confinement in algorithmic bubbles. In addition, education has a responsibility to broaden horizons, to open up worlds that may not initially appeal to students. It is not just about following their immediate interests, but about using them as a starting point to expand their experience. Another fundamental element is the social dimension. We know that young people are deeply motivated by interaction with others, by recognition from their peers and by collective participation. That social energy can become an extraordinary educational resource. However, as they advance through the education system, learning tends to become increasingly individualized, and we often waste that social potential.
I think there is an important limitation of our educational imagination here: we know what motivates students, but we often organize teaching as if it did not matter. Perhaps we should make much more use of those interests, bonds and forms of participation to drive meaningful learning. Because simply saying “this is important and you must learn it, whether it interests you or not” is unlikely to be a successful formula for educating. –Much has been written about “21st-century skills.” With the arrival of AI, which skills do you think remain indispensable in students' education? –I think the so-called four Cs (creativity, communication, critical thinking and collaboration) remain central to contemporary education. And most importantly, these skills cannot be developed by delegating them to artificial intelligence. AI can help structure learning experiences, offer support or facilitate certain processes, but it cannot replace the very exercise of those capacities. One learns creativity by creating. One learns critical thinking by thinking critically. One learns to write by writing. One learns collaboration by collaborating. That is one of the great challenges today: if AI does the work for us, we may get a result, but we lose the formative benefit of the process. Learning, in many ways, resembles physical exercise. We develop our mental, intellectual and imaginative capacities through sustained practice, even when it is difficult, slow or repetitive. Of course, AI makes it very tempting to choose the quickest and easiest path. And that temptation is understandable. But that is precisely where one of the greatest challenges for educators lies: building reasons stronger than simple prohibition so that students want to take the more demanding path of real learning.
Because prohibition alone rarely works in the long run. We have to get students to internalize the value of learning, to understand why certain skills matter and why some capacities can only be developed through personal effort. Not everything valuable in education is easy or immediate. Learning deeply takes time, practice and commitment.

9 May 2026

LGBTQ+ Youth Mental Health Is Suffering, but Schools Are Poised to Help

Bullying. Isolation. Stress. Everyone experiences these on the journey from adolescence to adulthood, but new data on the mental health of LGBTQ+ youth shows that the additional pressures they face increase their risk of suicide compared to their peers. The Trevor Project, a nonprofit focused on suicide prevention for LGBTQ+ youth, has released its most recent survey of 16,000 LGBTQ+ young people ages 13 to 24. Among the most concerning figures was one in 10 participants reporting that they had attempted suicide during the previous year. And more than one-third seriously considered suicide. Experts also tell EdSurge that the strain of mental health issues and unwelcoming school settings directly harm students’ ability to thrive in, or even attend, their classes. Despite the sobering results of the survey, the data also reveals solutions, including a role for schools. “One of the most important findings is that when adults, institutions, and communities become more affirming, the suicide risk of LGBTQ+ young people goes down,” Ronita Nath, the Trevor Project’s vice president of research, says. “Schools play a life-saving support by creating environments where LGBTQ+ young people feel safe, accepted and supported.”
Feeling the Pressure
With 2026 on track to be another record-breaking year for anti-LGBTQ+ bills introduced at the state and federal levels, a vast majority of survey respondents said they felt stressed, anxious or unsafe due to the policies and the debates surrounding them. When those young people are caught in the crossfire of heated political debates, Nath says the negative rhetoric that trickles down has real consequences. Youth who reported experiencing victimization due to their gender identity or sexual orientation (such as bullying, physical harm or exposure to conversion therapy) were three times as likely to attempt suicide as their peers. Those risks dropped among survey participants who said their school affirmed their identity.
Support can look like adopting curriculum that counters anti-LGBTQ+ bias and increasing access to mental health services. Forty-four percent of survey participants said they couldn’t access the mental health services they needed. Some of the barriers to those services were tangible, like not being able to afford transportation to see a counselor. But many were not: they cited fear of their mental health problems not being taken seriously, not being understood by a mental healthcare provider, or past negative experiences that made young people hesitant to seek services again. Nath encouraged schools to offer gender and sexuality alliances (GSAs), ensure anti-harassment policies were in place and provide professional development for educators to help ease students’ discomfort. “We know [that] not only improves mental health and well-being for LGBTQ+ youth, but for all their peers,” she says. Strain on School Success Research shows that well-being, engagement and a sense of belonging go hand-in-hand with students’ ability to thrive in school, according to Megan Pacheco, executive director of Challenge Success. The group is a nonprofit focused on increasing student well-being, engagement and belonging that’s based in Stanford’s Graduate School of Education. The stress that gender-diverse students — including transgender, non-binary and gender-queer youth — experience can become an obstacle to their academic success. If they feel their identity is threatened or lack a sense of belonging, Pacheco says, they’re less likely to reach out for help. “It's going to affect their participation, how they show up in the classroom, and it's going to affect their well-being,” she says. 
Challenge Success’ large trove of survey data on the school experiences of middle and high school students reveals that students who identify as transgender, non-binary or gender diverse report more stress than their peers who identify as boys and girls, says Sarah Miles, director of research for Challenge Success. “Instead of two or three sources of stress — family pressure, or peer relationships, or social media — it is just all the above,” Miles says. “In order to be able to function, use your working memory, be present, be engaged … if you have all those things on board that you're worrying about, you're just not able to attend to school in the same way.” Among LGBTQ+ youth who are in school, about 85 percent said they had at least one adult at school who is affirming of their identity, according to the Trevor Project data. More than half of respondents said school was an affirming place, second to online spaces. Matthew Rice, who chairs the science department at a New Jersey high school, tells EdSurge that students don’t judge safety by a school’s mission statement — they judge it by how adults respond to situations like harassing comments made in the hallway, classroom jokes, pronoun use and whether discipline is applied consistently among varying groups of students. Rice has published research on the experiences of transgender and nonbinary educators, but the overall lessons gleaned from his work apply to students as well. “Students notice who is allowed to exist authentically in schools,” Rice said via email. “Representation is not symbolic: It changes students’ perception of what futures are possible and who belongs in intellectual spaces. For many students, the first openly LGBTQ+ adult they meet is an adult at school.” When it comes to supporting gender-diverse students, Miles of Challenge Success says she wants to dispel the belief that helping them thrive is a zero-sum game. 
“I think there's sometimes a misconception that if we give these students support, then other students aren't getting support,” she says. “What's really important is that, by giving students who identify as gender diverse support, everyone benefits, because all students then feel safe to show up — whatever their identities.”

8 May 2026

A Cyberattack on Canvas Could Cause Lasting Aftershocks for Schools

Data from millions of students might have been compromised.

8 May 2026

A District Expects to Save $200K From AI-Powered 'Vibe Coding.' Here's How

This school district is using AI coding to develop cheaper, more customized ed-tech tools.

8 May 2026

A 2nd Canvas data breach causes major disruptions for schools, colleges

The Instructure-owned learning management system went offline on May 7 after a threat actor once again gained unauthorized access.

8 May 2026

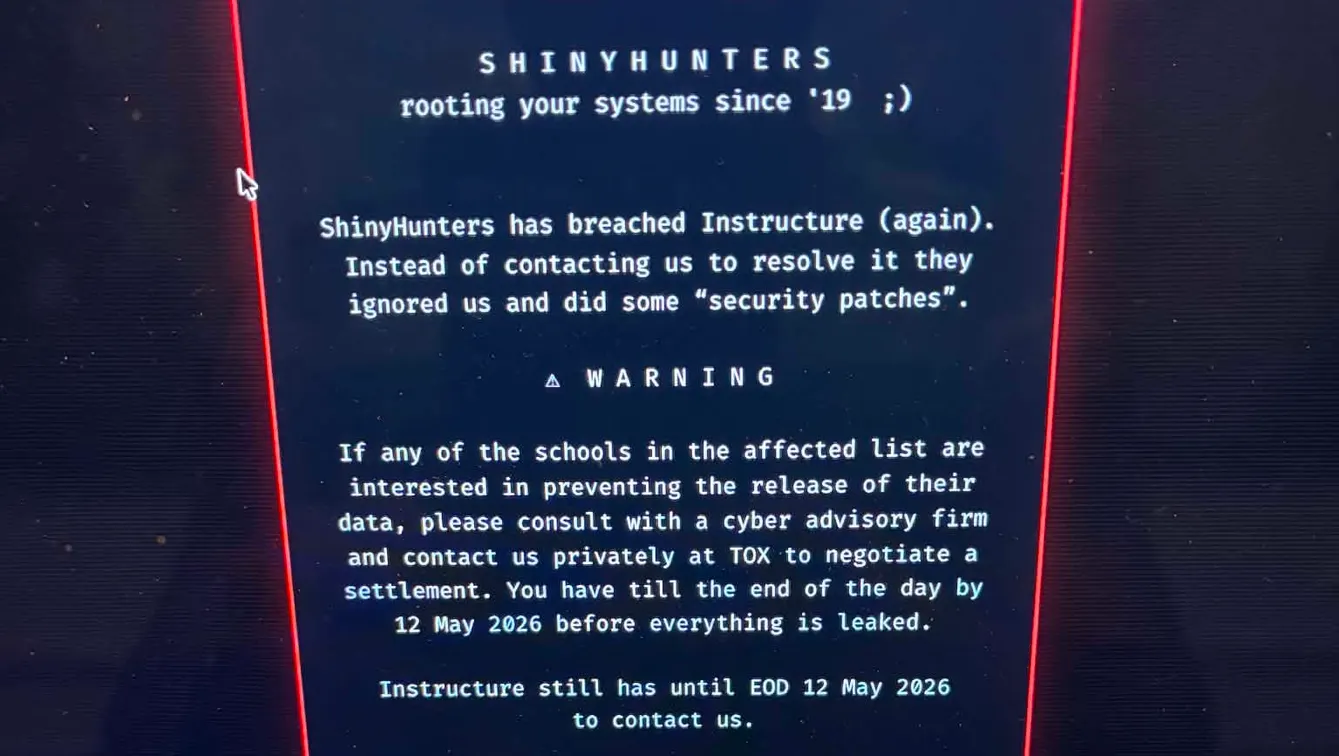

2nd Canvas data breach causes major disruptions for schools, colleges

Dive Brief:

A threat actor once again gained unauthorized access to Instructure’s Canvas learning management system on May 7, the ed tech company confirmed on its website. The incident caused disruptions for students and teachers at school districts and colleges nationwide as final exam season is underway.

Schools and colleges have had to offer grace periods for missed or late assignments affected by the Canvas outage. Pennsylvania State University, for example, even announced that all tests being administered at night on May 7 and all day on May 8 were canceled after the latest incident.

As of May 8, Instructure reported that Canvas is back online and safe to use. But some districts and universities have temporarily disabled Canvas as the ed tech company investigates the incident.

Dive Insight:

This is the second cybersecurity incident to target the Canvas learning management system within 8 days, according to Instructure. The company announced the first incident on May 1 in a status update on its website.

The threat actors breached Canvas by exploiting an issue on its Free-For-Teacher accounts during both incidents on April 29 and May 7, Instructure said. Because of this, the ed tech company said it is temporarily shutting down those accounts — a core part of the Canvas platform.

Canvas is used for student information including grades, assignments, attendance and course materials.

Virginia’s Roanoke County Public Schools issued a statement on the May 7 Canvas incident, noting that “some of our users may have seen a message today related to this incident on their computers when they logged into the Canvas system.” The district further advised students and staff to not engage with the message.

Canvas users at the University of Pennsylvania also saw a message on their system from a cybercrime group known as ShinyHunters, according to The Daily Pennsylvanian, the university’s independent student newspaper. Student publications at colleges across the U.S., including Harvard University, the University of Oklahoma and multiple University of California campuses, reported similar messages.

The message linked to a list of schools allegedly affected by the ShinyHunters data breaches into Canvas. It said those schools could negotiate a settlement with the cybercrime group by May 12 — the same deadline given to Instructure.

During the April 29 breach, Instructure said that Canvas users at affected organizations had certain personal information exposed including names, email addresses, student ID numbers, and messages. No further data was accessed on May 7, but an “unauthorized actor made changes to the pages that appeared when some students and teachers were logged in through Canvas,” the company said.

The Canvas outage and cybersecurity incident “highlights the real-life impact of failing to protect sensitive information collected by schools,” said Elizabeth Laird, director of equity in civic technology at the nonprofit Center for Democracy & Technology, in a May 8 statement.

“Not only did this incident interfere with essential learning activities, it has exposed sensitive data about nearly 300 million users, including messages that could include incredibly personal information,” Laird said.

At the same time, Laird pointed to the U.S. Department of Education’s Office of Educational Technology being shuttered last year. The office helped schools with responsible technology use, she said. Additionally, there have been significant funding cuts to cybersecurity supports for schools.

“This is an important wakeup call that schools and the companies that work with them have legal and ethical responsibilities to safeguard students and teachers online in the same ways that they are protected in the classroom,” Laird said.

Instructure is not the only ed tech company to face a major data breach in recent years. Other recent high-profile cyberattacks include PowerSchool, a cloud-based K-12 software provider, and Illuminate Education, a student information system provider.

The Canvas incident is a reminder that students and staff in schools have “very little control” over their mass amounts of sensitive data in ed tech platforms, said Shaila Rana, a cybersecurity professor at Purdue Global and a senior member of the Institute of Electrical and Electronics Engineers, a global technical professional organization, in a May 8 statement to K-12 Dive.

“It's really the asymmetry: users can't opt out, can't meaningfully audit how their data is protected, and are left absorbing the consequences when things go wrong,” Rana said. “What makes attacks on platforms like this especially damaging is the infrastructure dependency. It went down during finals week and it disrupted academic continuity across thousands of institutions simultaneously.”

Meanwhile, Kate Brody, policy director at Schools Beyond Screens — the organization that pushed for Los Angeles Unified School District to limit screen time and devices in its schools — said in a May 8 statement that the Canvas incident is the “perfect example” for why schools need to “interrogate their overuse of technology.”

8 May 2026

2nd Canvas data breach causes major disruptions for colleges

The Instructure-owned learning management system went offline on May 7 after a threat actor once again gained unauthorized access.

8 May 2026

2nd Canvas data breach causes major disruptions for colleges